Instagram executives have said they are "heartbroken" over the reported suicide of a teenager in Malaysia who had posted a poll on the app.

The 16-year-old is thought to have killed herself hours after asking other users whether she should die.

But the technology company's leaders said it was too soon to say if they would take any action against account holders who took part in the vote.

The Instagram chiefs were questioned about the matter in Westminster.

They were appearing as part of an inquiry by the UK Parliament's Digital, Culture, Media and Sport Committee into immersive and addictive technologies.

Reports indicate the unnamed teenager killed herself on Monday in the eastern state of Sarawak.

The local police have said that she had run a poll on the photo-centric platform asking: "Really important, help me choose D/L." The letters D and L are said to have represented "die" and "live" respectively.

This took advantage of a feature introduced in 2017 that allows users to pose a question via a "sticker" placed over one of their photos, with viewers asked to tap on one of two possible responses. The app then tallies the votes.

At one point, more than two-thirds of respondents had been in favour of the 16-year-old dying, said district police chief Aidil Bolhassan.

"The news is certainly very shocking and deeply saddening," Vishal Shah, head of product at Instagram, told MPs.

"There are cases... where our responsibility around keeping our community safe and supportive is tested and we are constantly looking at our policies.

"We are deeply looking at whether the products, on balance, are matching the expectations that we created them with.

"And if, in cases like the polling sticker, we are finding more evidence where it is not matching the expectations... we are looking to see whether we need to make some of those policy changes."

His colleague Karina Newton, Instagram's head of public policy, told the MPs the poll would have violated the company's guidelines. Both executives are normally based in Instagram's California offices.

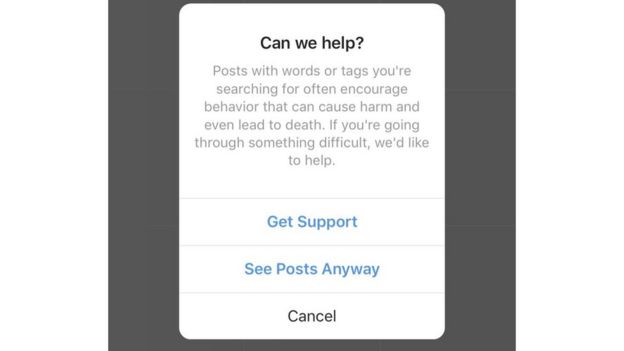

The platform has measures in place to detect "self-harm thoughts" and seeks to remove certain posts while offering support where appropriate.

For example, if a user searches for the word "suicide", a pop-up appears offering to put them in touch with organisations that can help.

But Mr Shah said that the way people expressed mental-health issues was constantly evolving, posing a challenge.

Damian Green, who chairs the committee, asked the two executives whether the Facebook-owned service could adapt some of the tools it had developed for targeting advertising in order to proactively identify people at risk of self-harm and reach out to them.

"Would it not be possible, where there are cases of people known to have been engaged in harmful content and [who] may have been at risk, that analysis could be done to see what other users share similar characteristics?" the MP asked.

Ms Newton replied that there were privacy issues to consider but that the company was seeking to do more to address the problem.

Mr Green also asked if Instagram might consider suspending or cancelling the accounts of those who had encouraged the girl to take her life.

But the executives declined to speculate on what steps would be taken.

"I hope you can understand that it is just so soon. Our team is looking into what the content violations are," said Ms Newton.

Under Malaysian law, anyone found guilty of encouraging or assisting the suicide of a minor can be sentenced to death or up to 20 years in jail.

The case follows that of Molly Russell, a 14-year-old British girl who killed herself in 2017 after viewing distressing material about depression and suicide that had been posted to Instagram.

The social network vowed to remove all graphic images of self-harm from its platform after her father accused the app of having "helped kill" his child.